AI Is Helping PMs Do the Wrong Things Faster

The PMs who win in the AI era won’t be the ones who process the most data. They’ll be the ones who ask the right questions — of the data and of their customers.

Most PMs using AI for customer research are doing the wrong things faster.

The most common setup I see right now: an AI agent that tallies feature requests across sales calls, support tickets, and customer transcripts. Clean ranked list. Updated in real time. Zero manual effort.

It feels productive. But it’s automating confirmation bias at scale.

Feature Requests Are Symptoms, Not Diagnoses

Feature requests are surface-level data. They tell you what customers think they want — not what they actually need. They reflect the solutions customers can imagine, which are always limited by what they’ve already seen.

This is the “faster horses” problem, dressed up in modern tooling. The observation attributed to Henry Ford has never been more relevant: if you ask people what they want, they’ll describe a slightly better version of what they already have. AI lets you aggregate those descriptions faster than ever. But speed doesn’t fix the underlying problem — you’re still listening to answers that come from the wrong questions.

When you use AI to synthesize feature requests, you get a beautiful, well-organized view of the surface. What you don’t get is any understanding of why those requests exist — which users feel the pain most acutely, what constraints they’re working within, and whether the features they’re asking for would actually solve the underlying problem.

What Happened When We Asked Different Questions

When I was leading discovery for Shutterstock Editor, we didn’t start by asking people what features they wanted in a photo editing tool. That question would have produced a predictable list: layers, filters, templates, text tools — the same features every editing tool already offers.

Instead, we asked about their goals. What were they making with the images they downloaded from Shutterstock? What were they using the edited images for? We asked about their jobs and how much time they had. We asked how confident they felt in their design skills.

These questions had nothing to do with the product category. They had everything to do with the customer’s world.

What we found was a segment that no feature request tally would have surfaced: people who needed to produce designed content but didn’t identify as designers. Bloggers. Social media managers. Small business owners putting together presentations. They had limited time and no patience for complex tools. Design work wasn’t their core job function or passion — they wanted the design step to take as little time as possible so they could get back to the work that actually mattered to them.

That insight is what let us scope an MVP around a specific, underserved segment instead of trying to build a Photoshop competitor for everyone. We launched to rave reviews from the target users, who told us it saved them time. The fringe users, the ones who needed more, asked for exactly the features our research had predicted they’d want. Usage grew 10x in the first year.

No amount of feature request tallying would have gotten us there. The insight came from understanding outcomes and constraints, not from counting what people asked for.

What You Should Be Pointing AI At

AI is incredible at synthesizing large volumes of data. The question isn’t whether to use it — it’s what you point it at.

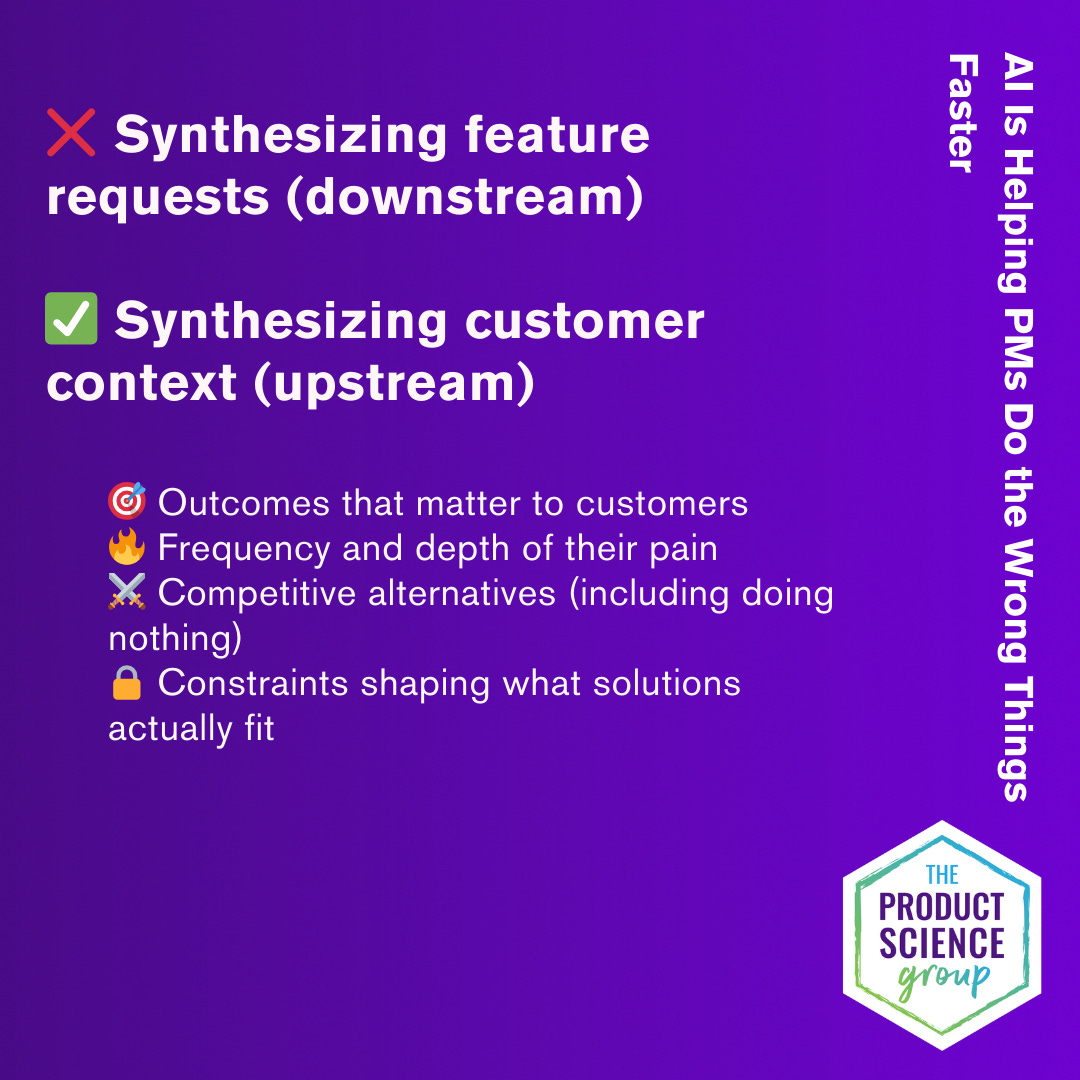

Most teams point AI at feature requests and bug reports. That’s downstream data. It tells you what people are reacting to in the product as it exists today. It’s useful for local optimizations — fixing what’s broken, smoothing what’s rough.

What you should be pointing AI at is the upstream context that helps you understand what products would actually be useful:

The outcomes that matter to customers — not what features they want, but what they’re trying to accomplish in their work and life

The frequency and depth of their pain — how often they hit the problem, and how much it costs them when they do

The competitive alternatives they’re considering — including the option of doing nothing, which is your most common competitor

The constraints they’re working under — time, budget, skills, organizational dynamics, technical limitations — the factors that determine whether a solution will actually fit into their world

AI can find traces of all of this in the data you already have — in sales call transcripts, in support conversations, in customer success notes, in usage patterns. But only if you ask it the right questions. An AI agent asked to “identify the top feature requests” will do exactly that, beautifully. An AI agent asked to “identify the most common unmet outcomes and the constraints preventing customers from reaching them” will give you something fundamentally more useful.
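To make the difference concrete, here is a minimal sketch of the two framings as prompt templates. The two quoted instructions come from the paragraph above. Everything else is an assumption for illustration: the names `DOWNSTREAM_PROMPT`, `UPSTREAM_PROMPT`, and `build_prompt`, the extra wording in the upstream prompt, and the sample transcripts. It only assembles text and does not call any particular AI tool.

```python
# Hypothetical prompt templates. Only the two quoted instructions come from
# the article; the remaining wording is an illustrative assumption.
DOWNSTREAM_PROMPT = "Identify the top feature requests in the transcripts below."

UPSTREAM_PROMPT = (
    "Identify the most common unmet outcomes and the constraints preventing "
    "customers from reaching them in the transcripts below. For each outcome, note:\n"
    "- how often the customer hits the problem and what it costs them\n"
    "- which alternatives they are considering, including doing nothing\n"
    "- which constraints apply (time, budget, skills, organizational dynamics, "
    "technical limitations)\n"
    "Quote the transcript passage that supports each point."
)


def build_prompt(instruction: str, transcripts: list[str]) -> str:
    """Join an instruction with numbered transcripts into one prompt string."""
    numbered = [
        f"[Transcript {i}]\n{text.strip()}"
        for i, text in enumerate(transcripts, start=1)
    ]
    return instruction + "\n\n" + "\n\n".join(numbered)


if __name__ == "__main__":
    # Made-up sample transcripts, for illustration only.
    sample = [
        "I just need the banner done before lunch. I'm not a designer.",
        "We export everything to slides because nobody has time to learn a new tool.",
    ]
    print(build_prompt(UPSTREAM_PROMPT, sample))
```

The same transcripts go into both prompts. Only the instruction changes, and with it what the agent is asked to look for.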

10% Moves vs. 10x Moves

Tallying feature requests to make local optimizations — that’s entry-level product work. It produces 10% improvements. There’s nothing wrong with it, and every product needs some of it. But it’s not where senior PMs should be spending their time and attention.

Understanding pain depth, frequency, competitive alternatives, and adoption constraints — that’s what produces the 10x moves. The ones that change the trajectory of a product. The ones that find a new segment, redefine a value proposition, or reveal that the biggest opportunity is somewhere nobody was looking.

If you’re a senior PM, a staff PM, or a product leader, and your primary use of AI is counting feature requests, you’re flying at the wrong altitude.

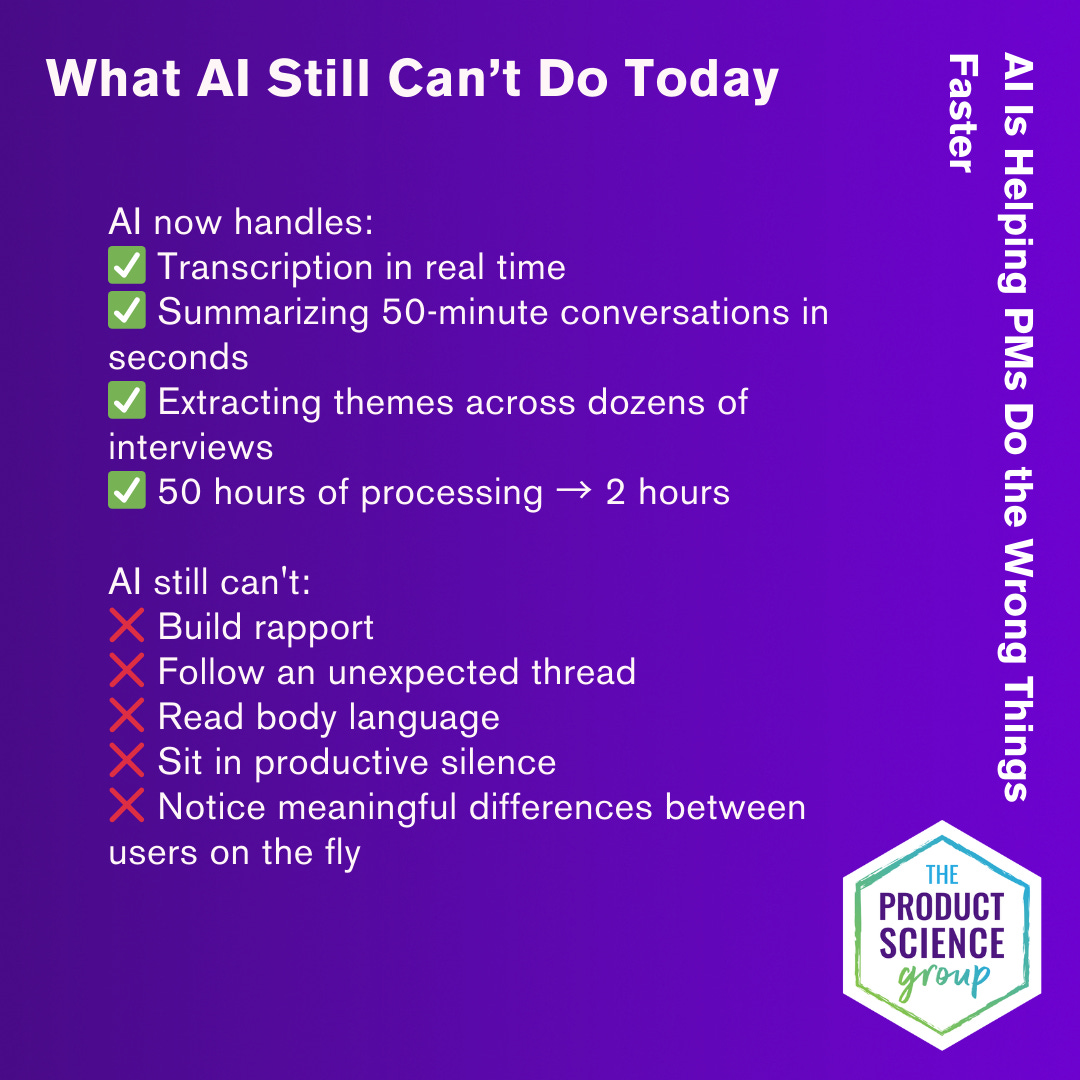

The Interview Still Matters Most

Here’s the thing AI can’t do: sit across from a customer and notice that they said “it’s fine” but didn’t mean it. Follow an unexpected thread because something in their tone of voice told you there was more to the story. Build enough rapport that someone admits they’ve been doing a workaround they’re embarrassed about. Notice that this person’s experience of the problem is fundamentally different from the last person’s, and adjust your questions on the fly to understand why.

Discovery interviews — real conversations with real people about their real lives — are still the highest-value thing a product manager can do. AI has made everything around the interview faster: transcription, summarization, theme extraction, pattern recognition across dozens of conversations. What used to take fifty hours of processing can now be done in two.

But the interview itself is irreducibly human. And it matters more now, not less. When every company has access to the same AI-generated market data, the same AI-summarized support tickets, the same AI-clustered usage patterns, the competitive advantage shifts to whoever has proprietary insight that AI can’t generate. That insight comes from direct conversation. It comes from asking the questions that surface what you don’t know yet — not the questions that confirm what you already believe.

AI hasn’t changed what great discovery looks like. It’s changed how fast you can do it — for better or worse. The PMs who win in the AI era won’t be the ones who process the most data. They’ll be the ones who ask the right questions — of the data and of their customers.

I’ve been thinking about this a lot as I write my book, The Product Science Principles, a guide to the repeatable, science-based methodology behind high-growth products. These ideas are also at the core of Ada, an AI coaching tool I recently built to help PMs sharpen their discovery interview guides. Because the questions you ask determine whether you find 10% insights or 10x ones.

If you want to try Ada or hear more about the book as it develops, reach out — I’d love to hear what you’re working on.

When customers describe a solution, they are almost always wrong. When customers describe a problem, they are almost always right. The key, as you’ve said, is personal experience with people who buy and use your products. Not salespeople. Not developers. Not executives. Customer conversations have been the pattern for product management success since I started decades ago.

Spot on. I’ve been prioritizing customer interviews over everything recently. Synthesized data from these conversations is the best thing you can share that resonates with leadership. Just be sure to mention you used AI to help.